Article written by Rowena Merritt, Director, National Social Marketing Centre

Here is Edward Bear, coming downstairs, now, bump, bump, bump, on the back of his head behind Christopher Robin. It is as far as he knows the only way of coming downstairs, but somewhere he feels there is another way, if only he could stop for a moment and think it.

A. A. Milne

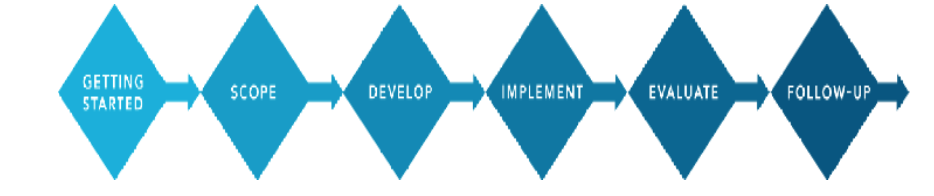

When developing your social marketing project, you should follow a systematic and staged approach, instead of just jumping in (Pooh Bear style). I have talked about the six stages of the NSMC’s planning process in past blogs. The stages are:

- Getting started

- Scoping

- Development

- Implementation

- Evaluation

- Follow-up

Reminders of what needs to be done at each stage are detailed on the NSMC’s website (https://www.thensmc.com/toolkit).

A really important stage, but one that is often overlooked or treated as an afterthought, is evaluation. The evaluation of your project will show you whether you have been successful and, if not, what you can learn to adjust it or to apply to similar projects in the future. I will be honest: I have never worked on a social marketing project where my whole intervention mix has worked perfectly first time; there are always elements that have worked well and ones that have not worked as well. The key is to understand what has not worked, and why, so that you can improve and avoid repeating mistakes.

Although technically evaluation is listed at stage five in the planning process, it is important that we consider this stage early on in the project in order to ensure we can identify and agree measures to demonstrate effectiveness, and that appropriate data is captured and recorded throughout the implementation stage.

Types of evaluation

There are five main types of evaluation:

- Formative – Undertaken during project development to help shape what you deliver. For example, testing your intervention mix with the target audience.

- Process – Undertaken during and after your project to measure how it is working, and to identify what went well, how this happened and how it might be improved during the project and in the future. Examples include client satisfaction surveys, mass media monitoring and telephone helpline data.

- Outcome – To measure what happened as a result of what you have done. An outcome should be a finite, measurable change, for example an increase in the number of people who know how often they should exercise, or in the number of people who report exercising.

- Impact – This refers to a much broader effect, for example a reduction in BMI rates. Impact can usually be conceptualised as the longer-term effect of an outcome.

- Economic – This looks at the value for money of your project. This is not easy to do, but there are tools available to help you think through this type of evaluation: https://www.thensmc.com/resources/vfm

Developing your evaluation plan

It is important to work closely with your team and key stakeholders to develop an evaluation plan that is robust and will provide useful data whilst also maximising resources. The NSMC identifies that, in order to provide value, you need to ensure that the design of the evaluation and subsequent evaluation activities will be:

- Useful – does the evaluation serve a purpose? Will it be responsive to stakeholder information needs?

- Feasible – what time, resources and expertise are available to you?

- Accurate – what information do you need to make your decisions?

- Ethical – will the evaluation cause harm or distress to your target audience?

It is likely that you will include both quantitative and qualitative research methods to increase the reliability of your results. As discussed in my last blog, data collection methods are usually either ‘quantitative’ (e.g. questionnaires, street surveys, web-based surveys, etc.) or ‘qualitative’ (e.g. focus groups, paired and individual in-depth interviews, etc.). While quantitative research provides ‘hard’ reliable evidence (‘what and how much’), qualitative research helps you gain a more in-depth understanding and answers the how and why type questions. An example of a simple and easy to use evaluation plan template is given below.

| | Objective: Reduce number of sugary/salty snacks primary school children eat | Objective | Objective |
| --- | --- | --- | --- |
| What measure? | Number of snacks purchased | | |
| Who for? | Parents with primary school aged children | | |
| How to measure? | Retailer exit interviews with parents; electronic point of sale data; survey looking at self-reported snacking behaviour of primary school aged children | | |
| When? | Baseline (i.e. very start of project or just before), mid point and end of project | | |
| Resource needed? | Agency resource for exit interviews | | |
| Analysis methods | SNAP software to produce results analysis | | |

Using different data sources to improve the validity of your findings

Determining the impact of a social marketing project is always challenging, as you will have more than one intervention (a mix of interventions, as discussed in a previous blog) and you will most likely be implementing in a ‘noisy’ environment: your project will not be delivered in isolation, and there will be other projects going on to try and get people to eat better, exercise more, etc. This makes it difficult to attribute impact to your project. To help you do this more effectively, you should triangulate your data sources and findings where possible. Triangulation in research refers to using various data sources to cross-validate study findings, where a multi-method approach is employed to capture what has happened. For example, if a reduction in BMI in school-aged children is your main objective, you might look at: exercise and eating habits through a self-reported survey, rates of BMI, and in-depth qualitative interviews with your target audience to explore attitudes and knowledge around the topic area.

However, ultimately, the methods you use are often determined by resources, so you have to be pragmatic. You will always have to make choices about, and limit, the number of variables that you will be able to measure.
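To make the idea of triangulation a little more concrete, here is a minimal sketch in Python. It assumes you have a baseline and an end-of-project reading from three separate sources for the snack example; the source names, the numbers and the helper functions `direction` and `triangulate` are all hypothetical and only there for illustration. It simply checks whether the sources point in the same direction, which is a rough first test rather than a full analysis.

```python
# Hypothetical baseline and end-of-project readings from three data sources.
# All names and numbers are illustrative assumptions, not real project data.
sources = {
    "self_reported_snacks_per_week": {"baseline": 9.0, "end": 7.5, "lower_is_better": True},
    "epos_snack_sales_per_week": {"baseline": 1200, "end": 1100, "lower_is_better": True},
    "exit_interview_share_buying_snacks": {"baseline": 0.62, "end": 0.64, "lower_is_better": True},
}


def direction(reading):
    """Return 'improved', 'worsened' or 'no change' for a single source."""
    change = reading["end"] - reading["baseline"]
    if change == 0:
        return "no change"
    # A fall is an improvement only when lower values are the goal.
    improved = (change < 0) == reading["lower_is_better"]
    return "improved" if improved else "worsened"


def triangulate(sources):
    """Return each source's verdict and whether all sources agree."""
    verdicts = {name: direction(reading) for name, reading in sources.items()}
    agree = len(set(verdicts.values())) == 1
    return verdicts, agree


verdicts, agree = triangulate(sources)
for name, verdict in verdicts.items():
    print(f"{name}: {verdict}")
print("Sources agree" if agree else "Sources disagree: investigate before attributing impact")
```

When the sources disagree, as they do in this made-up data, that is a prompt to look more closely (for example through your qualitative interviews) before claiming the project caused the change.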

Realistic expectations

Achieving sustained behaviour change is difficult, and you need to be realistic about what shifts in behaviour you can actually achieve. A past review[1], which included U.S., European and developing world interventions and used meta-analytic methods to estimate the average effect sizes for media campaigns, found the following average effect sizes on behaviour change (the effects reported were the changes in behaviour from pre-intervention to post-intervention):

- Seatbelt campaigns - 15%

- Dental care - 13%

- Adult alcohol reduction - 11%

- Family planning - 6%

- Youth smoking prevention - 6%

- Heart disease reduction (which includes nutrition and physical activity) - 5%

- Sexual risk taking - 4%

- Mammography screening - 4%

- Adult smoking prevention - 4%

- Youth alcohol prevention and cessation - 4-7%

- Youth drug and marijuana campaigns - 1-2%

Across all these studies, Snyder found that targeted behaviours increased above baseline by an average of about 5%, although it was not clear whether that behaviour change was sustained.
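If you want to compare your own results with figures like these, a simple starting point is the change from baseline to end of project. The sketch below assumes the shift is read as a plain percentage-point difference between two survey waves; the function name `percentage_point_shift` and the survey numbers are hypothetical, and the review's own effect sizes come from meta-analytic methods that are not reproduced here.

```python
def percentage_point_shift(baseline_count, baseline_n, end_count, end_n):
    """Percentage-point change in the share of people showing the behaviour.

    baseline_count / baseline_n: people reporting the behaviour, and total
    respondents, before the intervention.
    end_count / end_n: the same figures after the intervention.
    """
    baseline_rate = baseline_count / baseline_n
    end_rate = end_count / end_n
    return (end_rate - baseline_rate) * 100


# Hypothetical survey: 120 of 400 respondents at baseline, 140 of 400 at the end.
shift = percentage_point_shift(120, 400, 140, 400)
print(f"Shift from baseline: {shift:.1f} percentage points")
```

A difference like this says nothing on its own about whether the change was caused by your project or whether it will last, so treat it as one piece of evidence alongside your other data sources.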

Final thoughts

Evaluation is important, but at the same time, you have to be pragmatic. You will always have to make choices about and limit the number of variables that you will be able to measure. And if your whole intervention mix is only costing £10,000, then you do not want to be spending more than 10% of that on evaluation. Some of your stakeholders may hold information that will be useful for evaluation, so you may be able to save money that way.
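As a quick worked example of that rule of thumb, the sketch below works out an evaluation ceiling from a total budget. The function name `evaluation_budget_cap` and the default share of 10% are just a way of writing down the guideline above, not a fixed rule.

```python
def evaluation_budget_cap(total_budget, max_share=0.10):
    """Return the most you would want to spend on evaluation.

    max_share is the rule-of-thumb ceiling (10% by default); adjust it
    if your funder or organisation works to a different guideline.
    """
    return total_budget * max_share


# The example from the text: a £10,000 intervention mix.
cap = evaluation_budget_cap(10_000)
print(f"Evaluation ceiling: £{cap:,.0f}")
```

For a £10,000 project this gives a ceiling of £1,000, which is a useful number to have in mind when deciding how many measures your evaluation plan can realistically include.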

Finally, you need to remain objective and impartial. I often find this difficult when my own projects are being evaluated, as they are ‘my babies’ and I want the findings to be positive. But remember, learning what didn’t work as planned is as important as working out what did work. Even if you just see a small shift in behaviour, take that as a win. Social marketers and behavioural scientists across the globe know that changing people’s behaviour is difficult – so take pride in the wins, even if they are smaller than you had hoped for!

[1] Snyder LB. Health communication campaigns and their impact on behavior. J Nutr Educ Behav. 2007 Mar-Apr;39(2 Suppl):S32-40. Review.